Welcome to the world of Technical SEO!

If you're looking to improve your website's visibility and performance, you've come to the right place. In this article, we will explore the key factors that can help you maximise your website's potential.

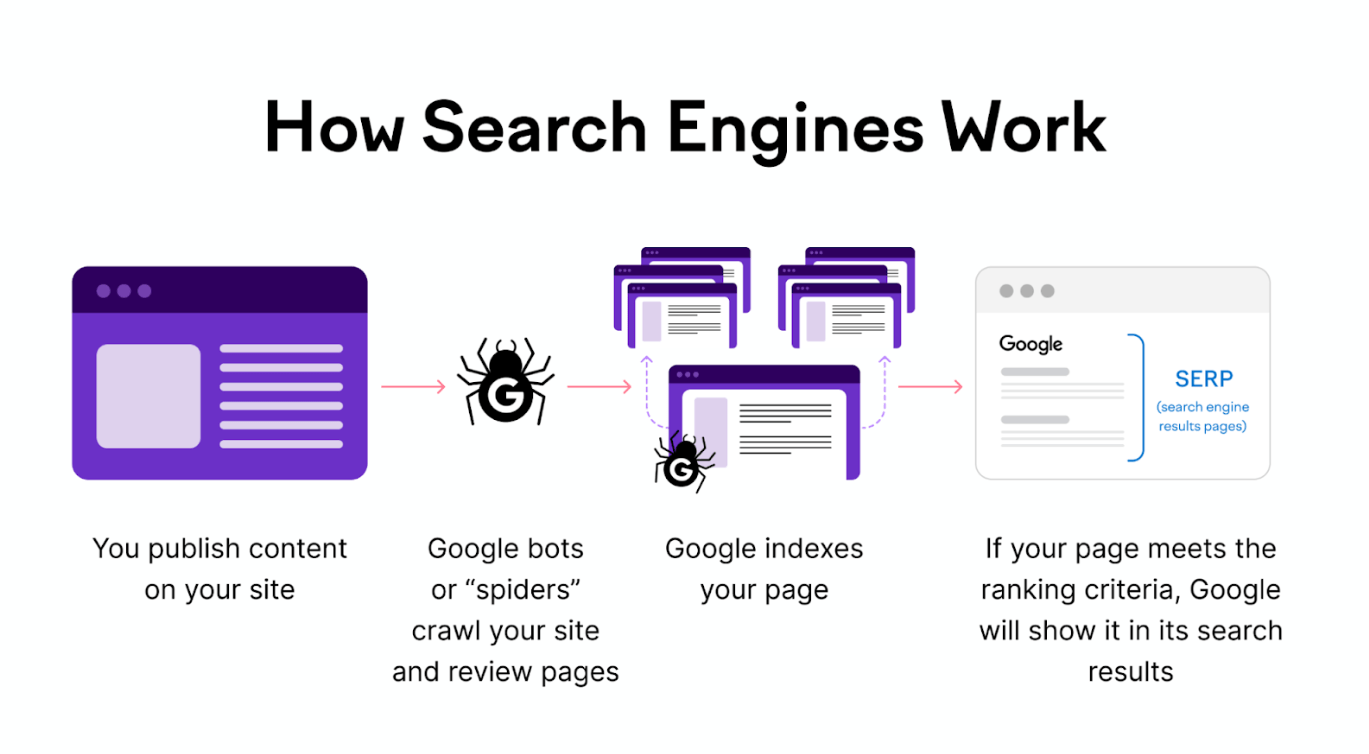

Technical SEO is vital; it guides search engine spiders in crawling and indexing your site efficiently. Beyond just boosting search rankings, it improves website performance, reducing bounce rates and amplifying conversions.

Let's dive in and unlock the full potential of your website’s search engine optimisation!

What is Technical SEO?

Technical SEO is like the behind-the-scenes work for a website.

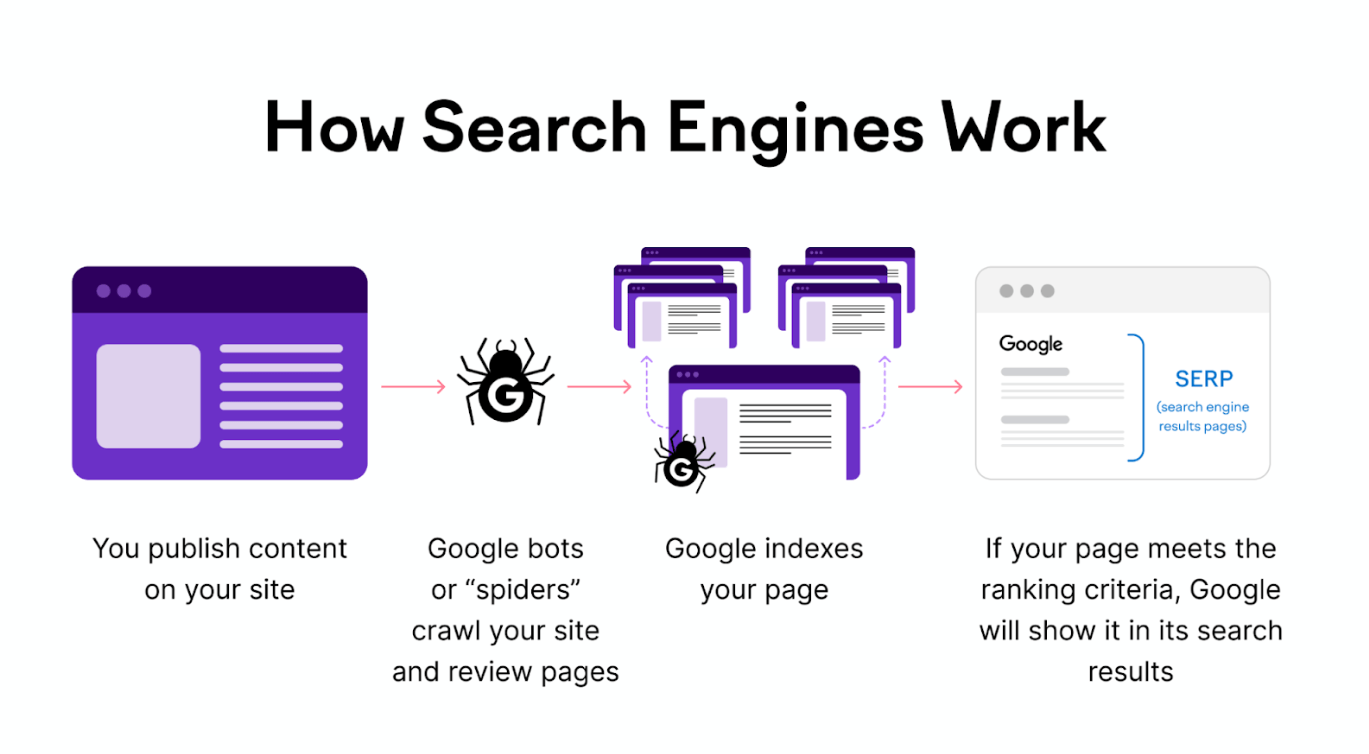

It's all the things we do to make sure search engines, like Google, can find and understand our site easily. When search engines understand a website better, that website often appears higher in search results.

Technical SEO plays a critical role in determining how search engine spiders crawl and index your site. By optimising the technical aspects of your website, you can enhance its visibility and ensure it ranks well in search engine results pages (SERPs).

From improving site speed and user experience to optimising meta tags and structured data, technical SEO covers a wide range of practices.

Why Is Technical SEO Important?

Search engines can only rank pages they are able to crawl and index, which makes technical SEO the foundation for everything else you do.

Key components of technical SEO include optimising on-page elements like meta tags for better click-through rates and clarity for search engines. Furthermore, in today's mobile-dominant browsing era, a mobile-friendly site is essential.

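Google predominantly uses the mobile version of a site for indexing and ranking (mobile-first indexing), so a poor mobile experience can hold back your rankings. Speed matters too: Google research has reported that 53% of mobile visits are abandoned when a page takes longer than 3 seconds to load. By embracing responsive design and speeding up mobile load times, you enhance both user experience and search rankings.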

Without solid technical SEO, even great content can be overshadowed, affecting bounce rates and conversions. Think of it as ensuring the stage lighting is right for a play.

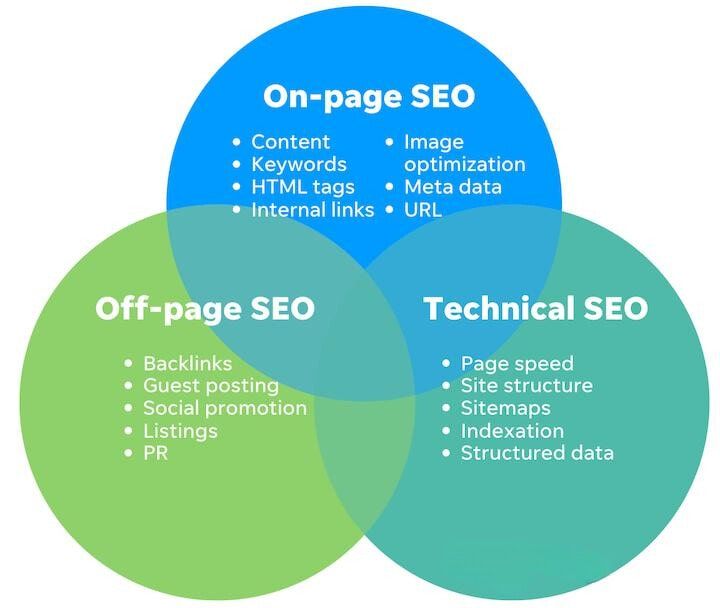

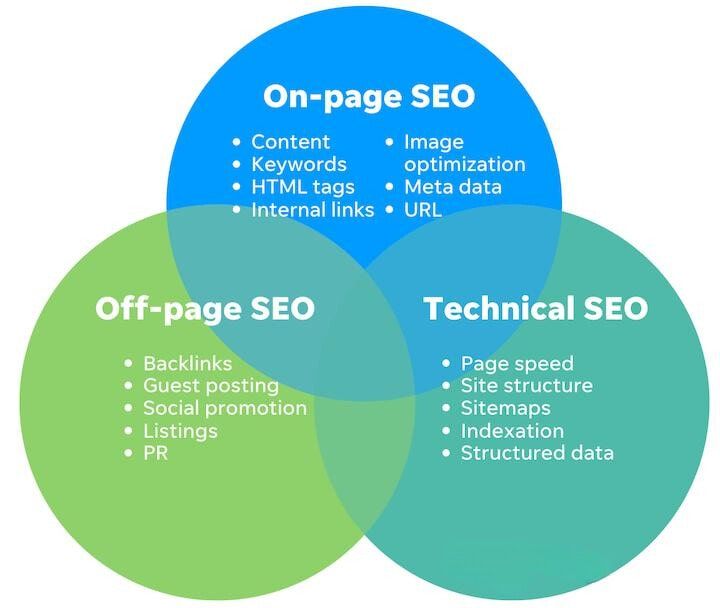

Technical SEO vs. On-Page SEO: What’s the Difference?

Technical SEO ensures everything runs smoothly. It's not about what's on your site but rather how your site works.

That includes:

- Performance: Making sure your site loads quickly. Nobody likes waiting, right? Things like choosing a good hosting service can affect this. If a site is slow, people may leave and check out another site.

- Responsive Design: Your site should look good on both computers and mobile devices. This helps people view your content easily, no matter what they're using.

- Other Elements: This includes ensuring your site is secure, can be understood by search engines, and even small things like picking a keyword for a website's address (though this is optional).

On the other hand, On-Page SEO is more about what's actually on your website – the content.

It focuses on:

- Keywords: This is about choosing the right words that people might use to find your content on search engines. A good mix of different types of keywords can help your site show up in search results.

- Content Quality: It's not just about using keywords; the content itself has to be good. It should be interesting, relevant, and organised. It's about keeping readers interested.

- User Experience (UX): This is about how your content is presented. Long, unbroken paragraphs can be hard to read. Good On-Page SEO ensures content is clear and enjoyable for visitors.

While both types of SEO are essential, they focus on different areas. Technical SEO is more about the website's functioning, while On-Page SEO centres on content and how users experience it.

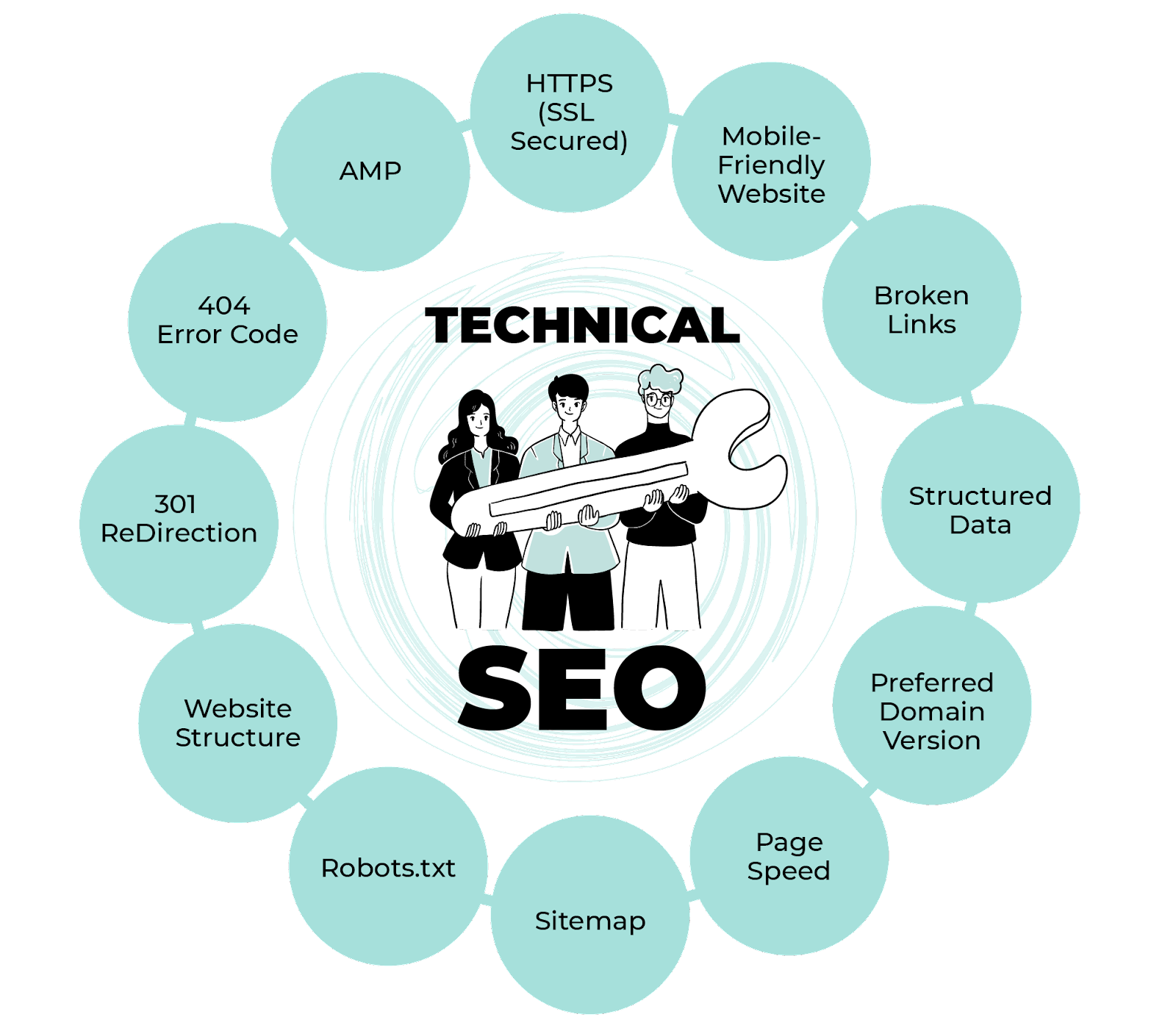

Technical SEO Key Factors for On-Page Optimisation

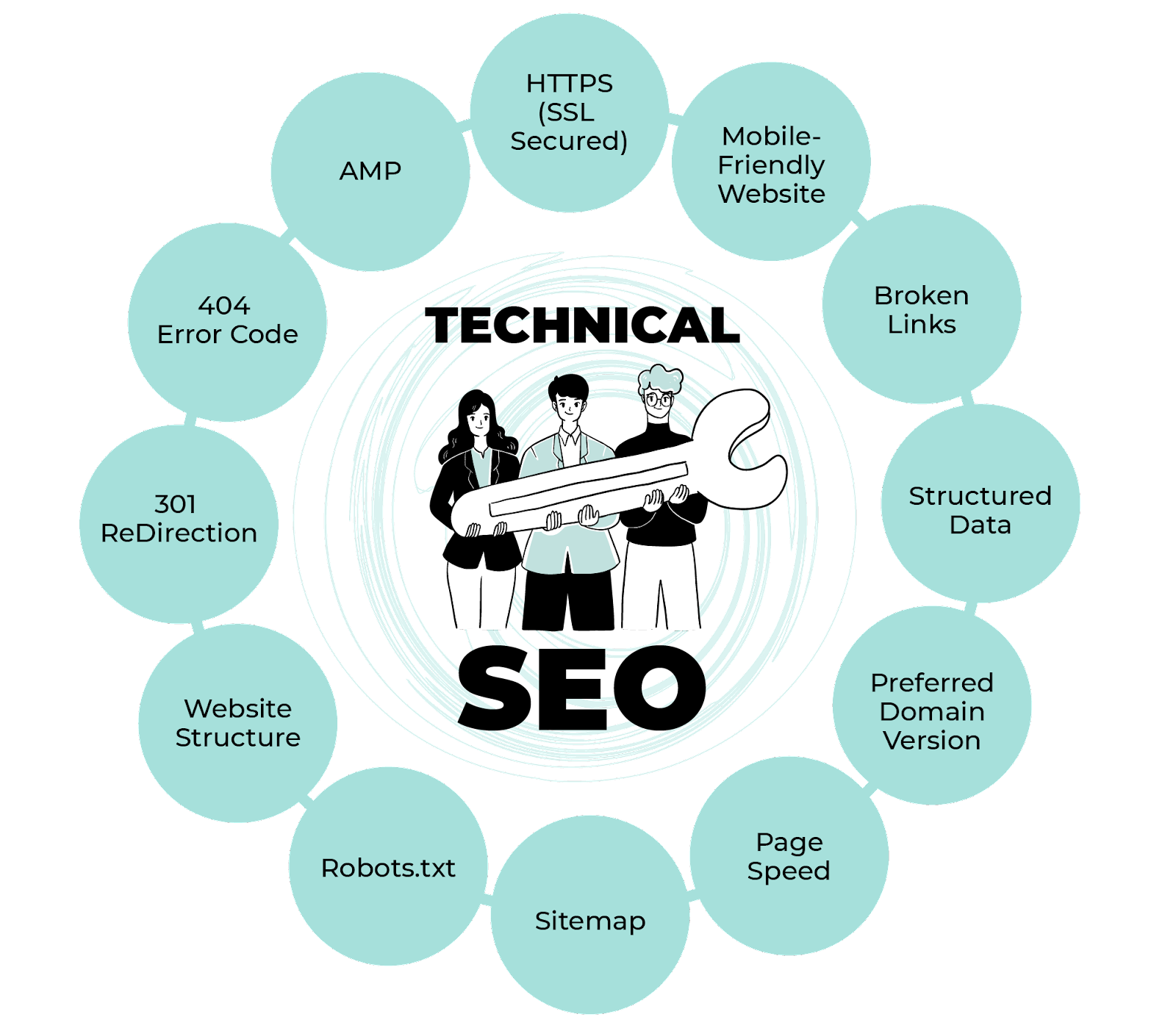

When working on Technical SEO, there are several factors that play a crucial role in making sure your website performs well.

These include:

- Crawlability

- Indexation

- Page Speed

- Mobile-friendliness

- Site Security (SSL)

- Structured Data

- Duplicate Content

- Site Architecture

- Sitemaps

- URL Structure

Let's delve deeper into what each of these factors means and why they're important.

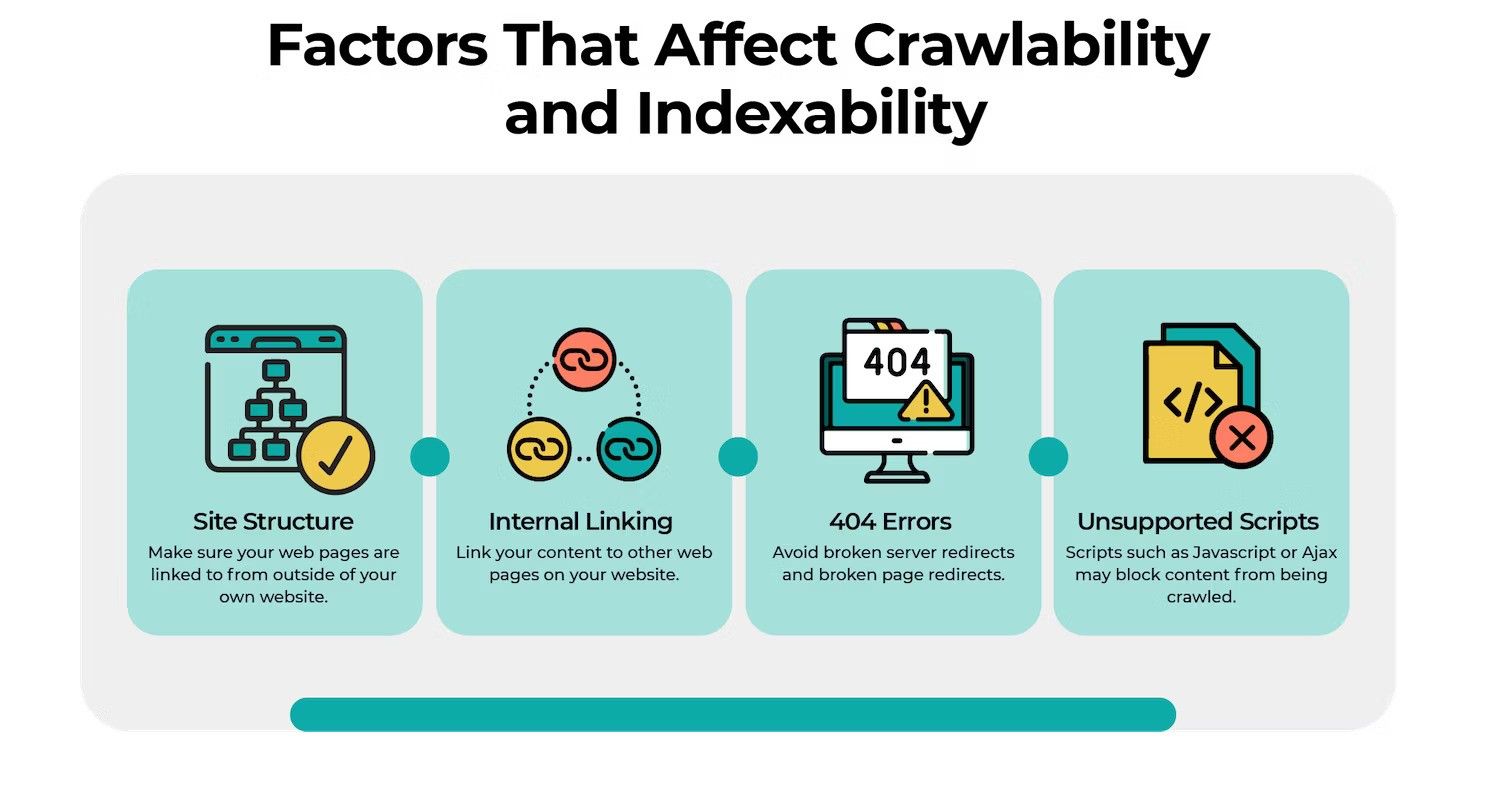

1. Website Crawlability

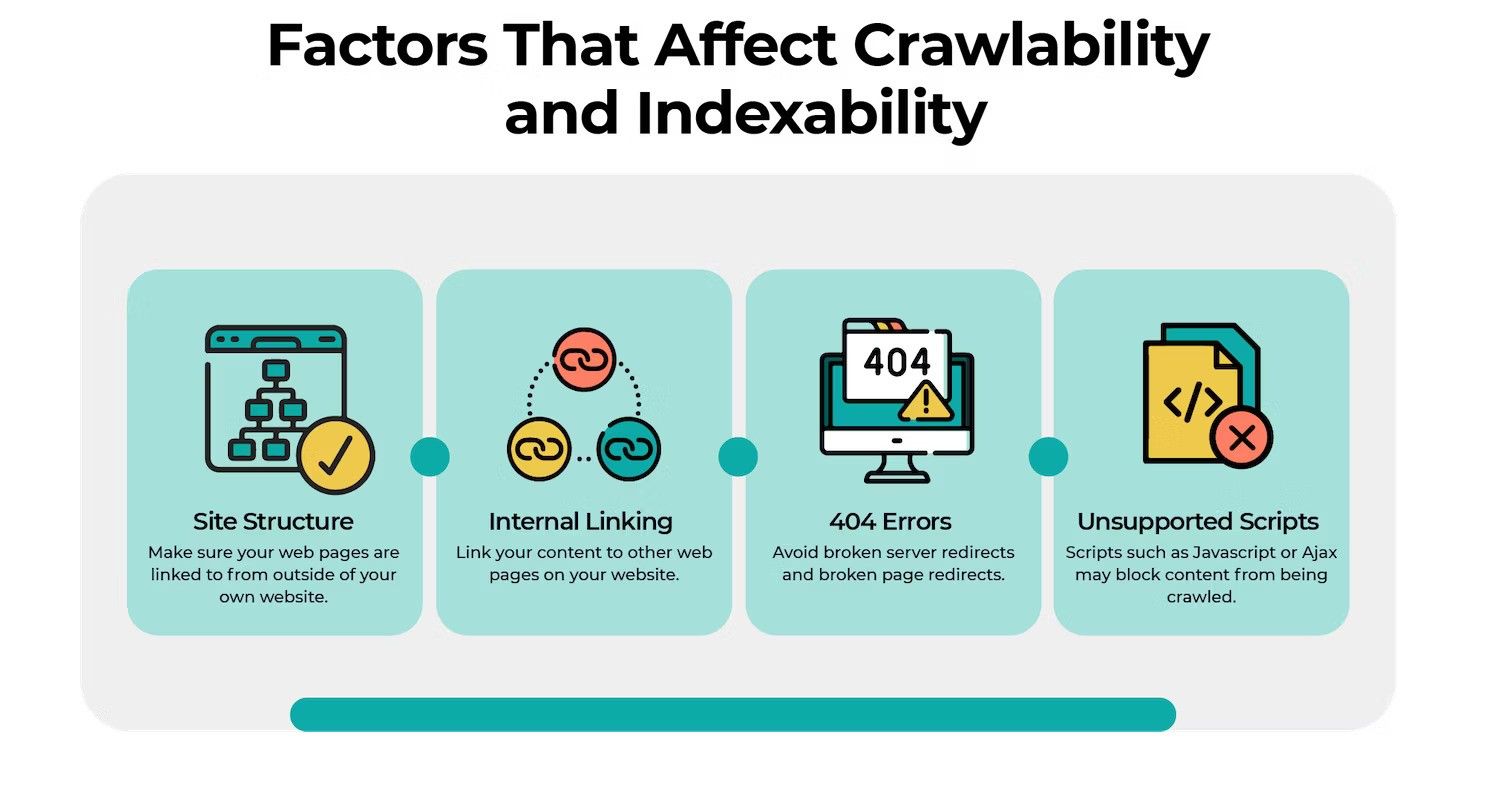

Crawlability is how easily search engines can discover and access your webpages, which they mainly do by following links.

It's like explorers using maps to find new places. If you want your content to appear in search results, search engines need easy access to it.

How to Improve Website Crawlability:

- SEO-Friendly Site Architecture: Think of your website's structure as its blueprint. Every page should be easily accessible, ideally a few clicks away from the homepage. This layout minimises orphan pages, which are pages with no internal links pointing to them, making them hard for both users and crawlers to find.

- Sitemaps: Offer search engines a map in the form of a sitemap. It's an XML file listing the key pages on your site, guiding search engines on what's available for crawling.
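To make "a few clicks away" and "orphan pages" concrete, here is a minimal sketch that measures click depth with a breadth-first search and lists pages nobody links to. The `SITE_LINKS` map and its URLs are hypothetical; in practice you would build the map from a crawl of your own site.

```python
from collections import deque

def click_depths(links, home):
    """Breadth-first search from the homepage: clicks needed to reach each page."""
    depths = {home: 0}
    queue = deque([home])
    while queue:
        page = queue.popleft()
        for target in links.get(page, []):
            if target not in depths:
                depths[target] = depths[page] + 1
                queue.append(target)
    return depths

def orphan_pages(links, all_pages):
    """Pages that no other page links to (self-links do not count)."""
    linked = {t for source, targets in links.items() for t in targets if t != source}
    return sorted(page for page in all_pages if page not in linked)

# Hypothetical internal-link map: page -> pages it links to.
SITE_LINKS = {
    "/": ["/blog/", "/services/"],
    "/blog/": ["/blog/technical-seo/"],
    "/services/": [],
    "/blog/technical-seo/": ["/"],
    "/old-landing-page/": ["/"],  # links out, but nothing links to it
}

depths = click_depths(SITE_LINKS, "/")
orphans = orphan_pages(SITE_LINKS, set(SITE_LINKS))
```

Pages missing from `depths` cannot be reached by following links from the homepage, and anything in `orphans` is a good candidate for a new internal link.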

2. Website Indexability

After crawling, search engines try to understand the content and store it in a massive database called the search index. For your content to appear in search results, it has to be in this database.

How to Check Website Indexability:

- Google Search Console:

  - Go to the URL Inspection tool and enter the complete URL you want to inspect.

  - If specific pages are not indexed, the report will also provide reasons, helping you understand and resolve potential obstacles.


- Direct Search on Google: Alternatively, you can perform a simple search on Google:

  - Open Google and type site: followed by your domain, for instance site:www.yourwebsite.com

  - The search results will list pages from your site that Google has indexed, along with an approximate count. If only a few or none of your pages appear, there might be indexation issues that need addressing.

3. Page Loading Speed

Website speed is crucial not just for search engines but also for user experience.

A faster website can lead to better engagement, higher conversion rates, and improved rankings in search results.

How to Boost Website Speed?

- Optimise Your Website's Code: Simplifying and streamlining your website's code can significantly boost its speed. This involves minifying CSS, JavaScript, and HTML and eliminating render-blocking JavaScript.

- Leverage Caching Techniques: Caching temporarily stores frequently used data so it doesn't have to be fetched or rebuilt every time a user visits. Browser and server-side caching reduce server load, which translates into quicker page load times.

- Minimise External Scripts: External scripts, such as ads, font loaders, or lead form builders, can slow down your website. To manage this, keep only essential scripts and load them asynchronously so they don't hold up the rest of the page.
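As a rough illustration of the render-blocking point, the sketch below scans a page's HTML for external scripts in the `<head>` that have neither `async` nor `defer`. The `SAMPLE_HTML` and its file names are made up, and this is a simple heuristic rather than a replacement for a proper audit tool.

```python
from html.parser import HTMLParser

class BlockingScriptFinder(HTMLParser):
    """Collects external <head> scripts that lack async/defer."""

    def __init__(self):
        super().__init__()
        self.in_head = False
        self.blocking = []

    def handle_starttag(self, tag, attrs):
        if tag == "head":
            self.in_head = True
        elif tag == "script" and self.in_head:
            attributes = dict(attrs)  # boolean attributes appear with value None
            is_module = attributes.get("type") == "module"  # modules are deferred by default
            if ("src" in attributes and not is_module
                    and "async" not in attributes and "defer" not in attributes):
                self.blocking.append(attributes["src"])

    def handle_endtag(self, tag):
        if tag == "head":
            self.in_head = False

def find_blocking_scripts(html):
    finder = BlockingScriptFinder()
    finder.feed(html)
    return finder.blocking

# Hypothetical page used only for illustration.
SAMPLE_HTML = """
<html><head>
<script src="/js/analytics.js"></script>
<script src="/js/chat-widget.js" async></script>
<script src="/js/app.js" defer></script>
</head><body><p>Hello</p><script src="/js/footer.js"></script></body></html>
"""

blocking = find_blocking_scripts(SAMPLE_HTML)
```

Here only `/js/analytics.js` is flagged. Adding `async` or `defer` to it, or moving it to the end of the body, would stop it delaying the first render.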


4. Page Mobile-Optimisation

Mobile optimisation ensures that a website functions and looks as intended on various mobile devices.

Implementing responsive design means the website's layout, images, and functionalities adjust automatically to fit the device's screen size, be it a smartphone, tablet, or desktop.

This offers a consistent and user-friendly experience across all platforms.

How To Make Website Mobile Friendly?

- Responsive Design & Viewport: Adopt a flexible design that automatically adjusts to different screen sizes. Incorporate the viewport meta tag to control page width and scaling on mobile.

- Speed Optimisation: Enhance mobile loading times by optimising images, leveraging browser caching, and minimising redirects.

- Optimised User Experience: Minimise pop-ups, utilise larger fonts (at least 15px) for readability, and design touch-friendly buttons with ample spacing for easy interaction on mobile devices.

- Clean and Streamlined Design: Prioritise a clutter-free mobile interface by trimming non-essential sections and ensuring content remains straightforward and relevant for users.

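- Regular Usability Checks: Google retired Search Console's Mobile Usability report in December 2023, so audit your pages regularly with a tool such as Lighthouse in Chrome DevTools to spot and rectify any mobile usability issues.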
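The viewport step is easy to verify automatically. This small sketch checks whether a page declares a viewport meta tag with `width=device-width`; `RECOMMENDED_VIEWPORT` is the commonly used form of the tag, and the helper names are our own.

```python
from html.parser import HTMLParser

# The widely used responsive viewport declaration.
RECOMMENDED_VIEWPORT = '<meta name="viewport" content="width=device-width, initial-scale=1">'

class ViewportFinder(HTMLParser):
    """Remembers the content attribute of the viewport meta tag, if present."""

    def __init__(self):
        super().__init__()
        self.content = None

    def handle_starttag(self, tag, attrs):
        attributes = dict(attrs)
        if tag == "meta" and (attributes.get("name") or "").lower() == "viewport":
            self.content = attributes.get("content") or ""

def viewport_settings(html):
    """Return the viewport directives as a dict, or None if the tag is missing."""
    finder = ViewportFinder()
    finder.feed(html)
    if finder.content is None:
        return None
    settings = {}
    for part in finder.content.split(","):
        key, _, value = part.partition("=")
        if key.strip():
            settings[key.strip().lower()] = value.strip().lower()
    return settings

def is_responsive_viewport(html):
    """True when the page sets the viewport width to the device width."""
    settings = viewport_settings(html)
    return settings is not None and settings.get("width") == "device-width"
```

For example, `is_responsive_viewport(page_html)` returns `False` both for a page with no viewport tag and for one that hard-codes a fixed width such as `width=1024`.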

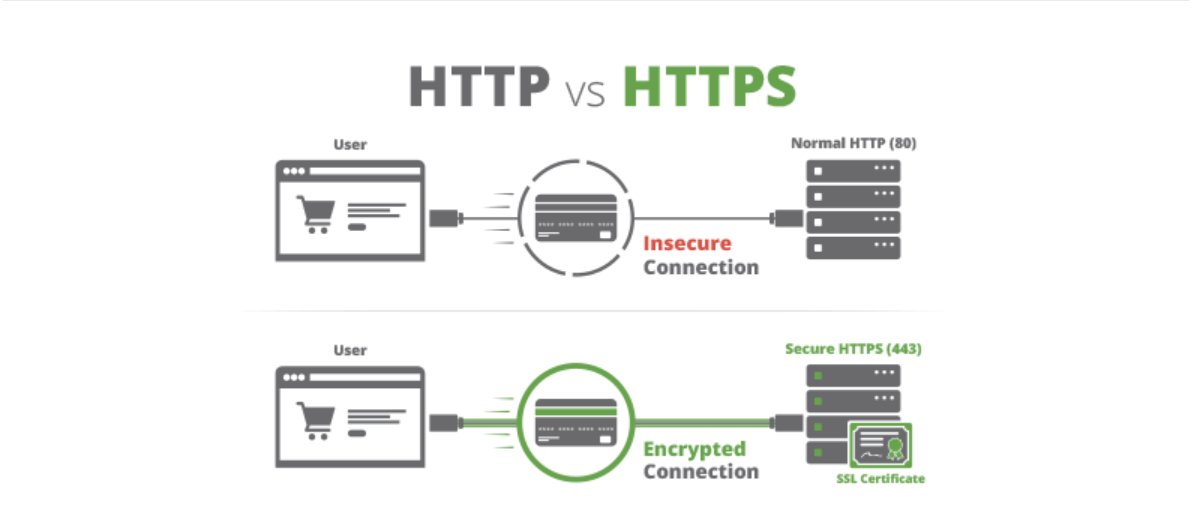

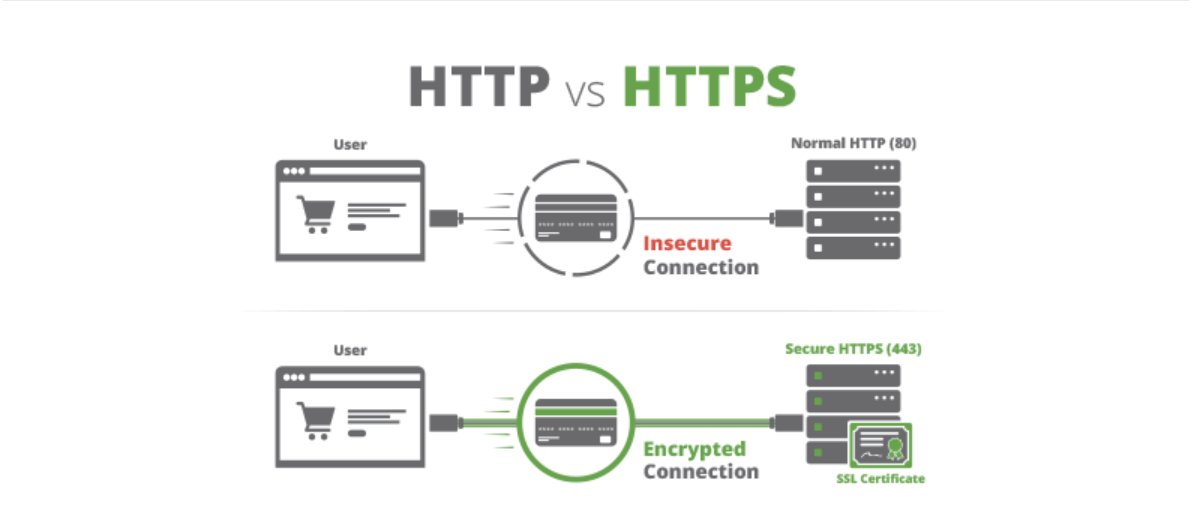

5. Website Security (SSL)

Website security revolves around ensuring a safe browsing experience for users, primarily by protecting personal data like names, addresses, login credentials, and credit card information from potential interception.

It also affects search rankings: Google uses HTTPS as a lightweight ranking signal, and browsers such as Chrome label pages served without it as "Not Secure", discouraging visitors.

At the forefront of this is the SSL (Secure Sockets Layer) protocol, which has since been superseded by TLS (Transport Layer Security), although the certificates are still commonly called SSL certificates. An SSL certificate enables an encrypted connection between a website and its visitors, safeguarding data transfers such as form submissions or login credentials.

To ensure website security, you must prioritise acquiring and properly installing an SSL certificate.

How to Secure Your Website:

- Obtain SSL: Acquire an SSL certificate from your hosting provider or platform, such as GoDaddy, Bluehost, Wix, or Squarespace. Many hosts include a free certificate, for example via Let's Encrypt.

- Install SSL: Follow your hosting provider's instructions or guidelines to correctly install and configure the SSL certificate for your website.

- Verify Installation: Check that your website's URL begins with "https://". Many browsers also show a padlock icon in the address bar to indicate the secure connection.

- Regularly Update: SSL certificates have expiration dates. Set reminders to renew them before they expire, or enable auto-renewal where your provider offers it, to maintain a secure website connection.
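If you would rather not rely on calendar reminders, the renewal check can be scripted. The sketch below uses Python's standard `ssl` module; the 30-day warning window and the function names are assumptions for illustration, and `fetch_not_after` needs network access to the site you are checking.

```python
import socket
import ssl
import time

def fetch_not_after(hostname, port=443, timeout=10.0):
    """Connect over TLS and return the certificate's 'notAfter' string."""
    context = ssl.create_default_context()
    with socket.create_connection((hostname, port), timeout=timeout) as sock:
        with context.wrap_socket(sock, server_hostname=hostname) as tls:
            return tls.getpeercert()["notAfter"]

def days_until_expiry(not_after, now=None):
    """Whole days from `now` (epoch seconds) until the certificate expires."""
    expires_at = ssl.cert_time_to_seconds(not_after)
    current = time.time() if now is None else now
    return int((expires_at - current) // 86400)

def needs_renewal(not_after, now=None, warn_days=30):
    """True when the certificate expires within `warn_days` days (assumed window)."""
    return days_until_expiry(not_after, now) <= warn_days
```

A scheduled job could call `needs_renewal(fetch_not_after("www.yourwebsite.com"))` once a day and send an alert when it returns `True`.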

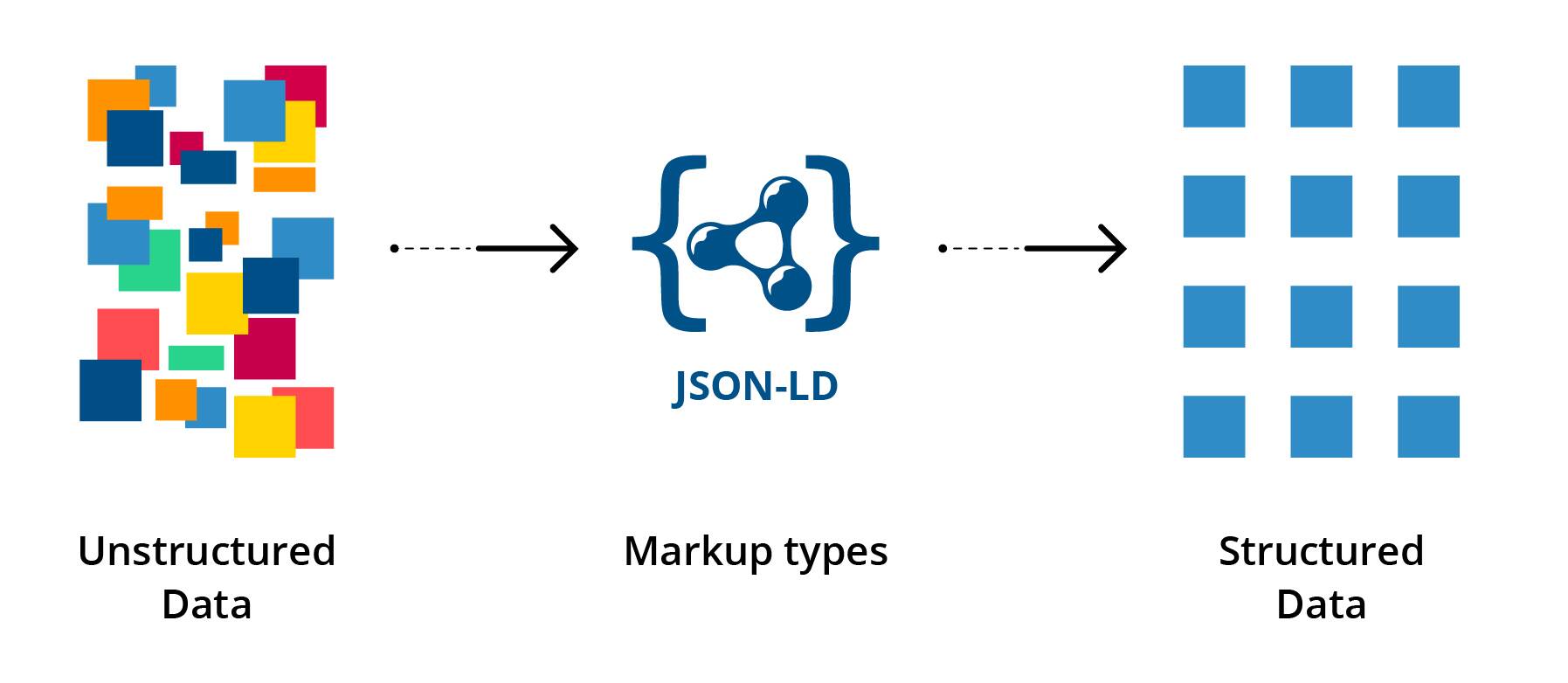

6. Website Structured Data

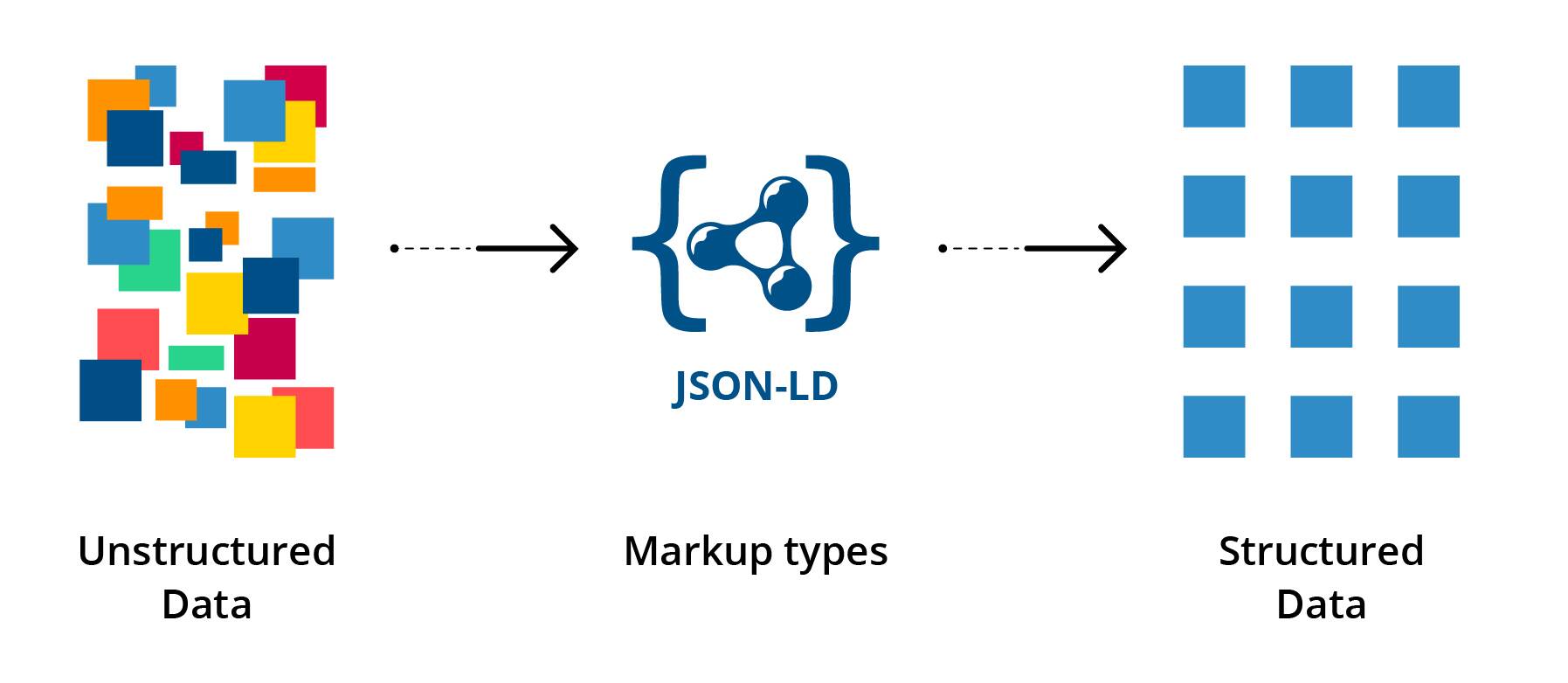

Structured data refers to a standardised format for providing information about a page and classifying its content.

Google and other search engines use structured data to generate rich snippets, which are enhanced search results displaying additional, relevant details beneath the title and description. These rich snippets can significantly improve the page's visibility and click-through rate (CTR) in search results.

How to Implement Structured Data?

- Determine Relevance: Decide on the type of structured data markup appropriate for your content (e.g., "Product" for e-commerce product pages).

- Choose the Right Markup: Google supports structured data in JSON-LD, Microdata, and RDFa formats and recommends JSON-LD. Use markup that aligns with your page's content.

- Use Tools: Use structured data generator tools to create the appropriate JSON-LD code without writing it manually.

- Implementation for CMS: For platforms like WordPress, plugins such as Yoast SEO can simplify the process of adding structured data.

- Embed Code: Insert the structured data script into the relevant page's HTML.

- Test and Validate: After implementation, use Google's Rich Results Test or the Schema Markup Validator (which replaced Google's retired Structured Data Testing Tool) to ensure the markup is correctly implemented.

- Monitor in Search Console: Google Search Console provides reports on how your structured data is performing and alerts for any issues.
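To show what the generated markup looks like, here is a minimal sketch that builds a schema.org "Product" snippet in JSON-LD. The product details are invented, and the chosen properties are only a small subset; check Google's documentation for the fields your rich result type requires.

```python
import json

def product_json_ld(name, description, price, currency, url):
    """Build a <script> tag containing schema.org Product markup as JSON-LD."""
    data = {
        "@context": "https://schema.org",
        "@type": "Product",
        "name": name,
        "description": description,
        "url": url,
        "offers": {
            "@type": "Offer",
            "price": f"{price:.2f}",
            "priceCurrency": currency,
            "availability": "https://schema.org/InStock",
        },
    }
    # Escape "</" so text inside the data can never close the script tag early.
    payload = json.dumps(data, indent=2).replace("</", "<\\/")
    return f'<script type="application/ld+json">\n{payload}\n</script>'

# Hypothetical product used only for illustration.
snippet = product_json_ld(
    name="Example Widget",
    description="A hypothetical product used for illustration.",
    price=19.99,
    currency="GBP",
    url="https://www.example.com/widget",
)
```

The resulting `snippet` string is what you would embed in the product page's HTML before running it through a validation tool.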

7. Page Duplicate Content

Duplicate content refers to content that either matches other content exactly or is very similar, and can be found across multiple pages on a single site or across various sites.

This can lead to issues such as confusing search engines about which version of the content to show in the search results, diluting backlink value, and wasting crawl budget.

Google does not directly penalise sites for duplicate content, but it can affect a site's search performance negatively.

How to Fix Duplicate Content?

- Recognise the Issue: Understand the difference between exact and near-duplicate content. Content that is very similar, with just a few words changed, can still be considered duplicate.

- Site Taxonomy: Review your website's structure and content organisation. Ensure each page has a unique H1 and focus keyword to prevent overlap.

- Canonical Tags: Use the rel=canonical tag to specify the "master" version of a page if duplicates are necessary or unavoidable. This tells search engines which version of a page they should consider as the primary one.

- Meta Tagging: Implement the 'noindex' meta robots tag on pages you don't want to appear in search results. This can prevent certain duplicate content from being indexed by search engines.

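- Handle URL Parameters: URL parameters used for tracking, sorting, or filtering often result in duplicate content. Google retired Search Console's URL Parameters tool in 2022, so point parameterised URLs at the primary version with canonical tags and link internally to the clean URL.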

- Manage Duplicate URLs: Ensure a consistent URL structure to prevent issues like "www vs. non-www" or "HTTP vs. HTTPS." Implement redirects if necessary.

- Implement Redirects: Use 301 redirects to send users from duplicate content pages to the primary version. Always redirect to the higher-performing or more authoritative page.

- Regular Monitoring: Regularly check for duplicate content using tools and crawlers. Monitoring helps in identifying and rectifying any unintended duplication quickly.
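Many duplicate URLs differ only in protocol, "www", or tracking parameters, so they can be spotted by normalising each URL and grouping the results. The sketch below assumes `https://www.example.com` is the preferred version and uses a hypothetical list of tracking parameters; adjust both to your own site.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit

# Assumed list of parameters that never change the page content.
TRACKING_PARAMS = {"utm_source", "utm_medium", "utm_campaign",
                   "utm_term", "utm_content", "gclid", "fbclid"}

def canonical_url(url, preferred_host="www.example.com"):
    """Normalise protocol, host, and query string to one preferred form."""
    parts = urlsplit(url)
    host = parts.netloc.lower()
    bare_host = preferred_host.removeprefix("www.")
    if host in (bare_host, "www." + bare_host):
        host = preferred_host
    kept = sorted((key, value)
                  for key, value in parse_qsl(parts.query, keep_blank_values=True)
                  if key.lower() not in TRACKING_PARAMS)
    return urlunsplit(("https", host, parts.path or "/", urlencode(kept), ""))

def group_duplicates(urls):
    """Map each canonical URL to the variants that collapse onto it (2+ only)."""
    groups = {}
    for url in urls:
        groups.setdefault(canonical_url(url), []).append(url)
    return {canon: variants for canon, variants in groups.items() if len(variants) > 1}

def canonical_tag(url):
    """The <link> element to place in the page's <head>."""
    return f'<link rel="canonical" href="{canonical_url(url)}">'
```

Running `group_duplicates` over the URLs from a crawl or your server logs gives you a list of variants that should either carry a canonical tag or be 301-redirected to the primary version.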

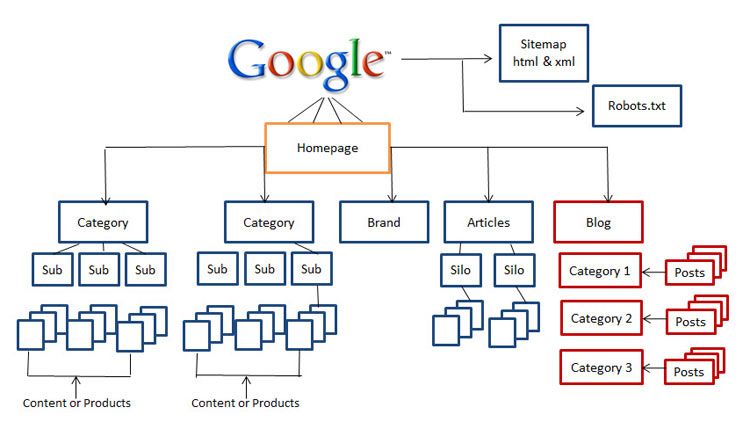

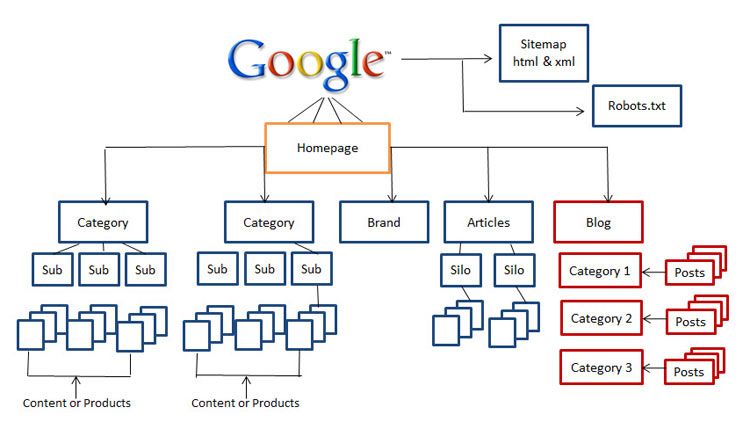

8. Website Architecture

Website architecture refers to the systematic organisation of a site, ensuring visitors can easily navigate from a broad topic (homepage) to specific content (like a blog post).

A well-structured site helps users quickly find what they need and signals to search engines the significance and interrelation of pages.

Google advises that every page should be accessible via links without necessitating internal search functions.

How to Ensure Optimal Page Structure?

- Flat Structure: Ensure most pages are 1-3 clicks away from the homepage. Relying solely on a navigation menu isn't enough; Google emphasises that one should be able to move from any URL to another just by following on-page links.

- Breadcrumb Navigation: Breadcrumbs help visitors recognise their location on a site. It's inspired by Hansel and Gretel's breadcrumb trail, ensuring users always have a clear path back to the homepage.

- XML Sitemap: This serves as a roadmap for search engines to crawl and index your site. Sitemaps are crucial for larger sites, new sites, those with unlinked content sections, or sites rich in media.

- URL Structure: Clean and logical URLs matter. Google recommends simple, short URLs, using hyphens for clarity, employing https:// for security, and avoiding unnecessary stop words or date stamps.
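An XML sitemap is simple enough to generate yourself. The following sketch builds one with Python's standard library using the sitemaps.org format; the page URLs and dates in `PAGES` are placeholders, and most CMS platforms or SEO plugins will produce this file for you.

```python
import xml.etree.ElementTree as ET

SITEMAP_NS = "http://www.sitemaps.org/schemas/sitemap/0.9"

def build_sitemap(pages):
    """pages: iterable of (url, last_modified) pairs, dates as YYYY-MM-DD."""
    urlset = ET.Element("urlset", xmlns=SITEMAP_NS)
    for loc, lastmod in pages:
        url = ET.SubElement(urlset, "url")
        ET.SubElement(url, "loc").text = loc
        ET.SubElement(url, "lastmod").text = lastmod
    body = ET.tostring(urlset, encoding="unicode")
    return '<?xml version="1.0" encoding="UTF-8"?>\n' + body

# Placeholder pages used only for illustration.
PAGES = [
    ("https://www.example.com/", "2024-01-15"),
    ("https://www.example.com/blog/technical-seo/", "2024-02-01"),
]

sitemap_xml = build_sitemap(PAGES)
```

Save the output as `sitemap.xml` at the root of your site and submit it in Google Search Console. A single sitemap file is limited to 50,000 URLs, so larger sites split theirs into several files.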

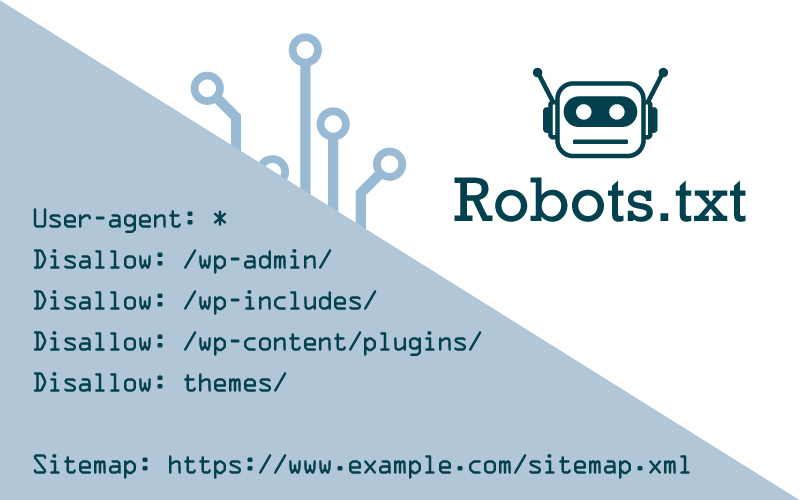

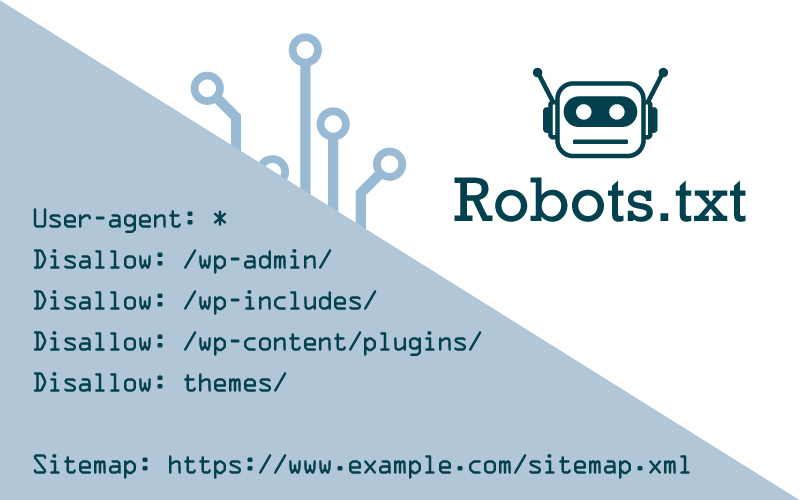

9. Robots.txt File

Robots.txt is a crucial file placed at the root of your website (e.g., example.com/robots.txt) that tells web crawlers, such as search engine bots, how to interact with your site's pages. It can specify which pages to crawl or avoid and even point crawlers to your sitemap.

Properly configuring this file ensures that search engines understand which content you deem important and which areas you would rather they skip. Note that robots.txt is publicly readable and only controls crawling, so it is not a way to keep content private. In essence, robots.txt offers a strategic way to communicate with web crawlers, ensuring your site's vital content is accessed and prioritised correctly.

How to Implement Robots.txt file?

- Syntax Guide:

  - Block all crawlers from your site:

        User-agent: *
        Disallow: /

  - Permit all crawlers access:

        User-agent: *
        Allow: /

  - Restrict specific crawlers from certain pages:

        User-agent: Googlebot
        Disallow: /seo/keywords/

- Page-Level Control: To prevent search engines from indexing specific pages, use the robots meta tag within the <head> section of the page. Crawlers must be able to fetch the page to see this tag, so don't also block the page in robots.txt. For instance:

  - To stop search engines from indexing a specific page but allow link following:

        <meta name="robots" content="noindex,follow" />

  - To also prevent link following:

        <meta name="robots" content="noindex,nofollow" />
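Before publishing a robots.txt file, it's worth testing which URLs it actually blocks. Here is a minimal sketch using Python's built-in `urllib.robotparser`; the rules mirror the examples above, while the domain and sitemap URL are placeholders. Python's parser applies rules in file order, which may differ from Google's most-specific-rule matching in edge cases, so treat the result as a sanity check.

```python
from urllib.robotparser import RobotFileParser

# Example rules: one directive per line, groups separated by a blank line.
ROBOTS_TXT = """\
User-agent: Googlebot
Disallow: /seo/keywords/

User-agent: *
Allow: /

Sitemap: https://www.example.com/sitemap.xml
"""

def load_rules(robots_txt):
    """Parse robots.txt content that is already in memory (no network request)."""
    parser = RobotFileParser()
    parser.parse(robots_txt.splitlines())
    return parser

rules = load_rules(ROBOTS_TXT)

googlebot_blocked = not rules.can_fetch("Googlebot", "https://www.example.com/seo/keywords/research")
googlebot_blog_ok = rules.can_fetch("Googlebot", "https://www.example.com/blog/")
other_bot_ok = rules.can_fetch("Bingbot", "https://www.example.com/seo/keywords/research")
sitemaps = rules.site_maps()  # available in Python 3.8+
```

With these rules, Googlebot is kept out of `/seo/keywords/` but can still crawl the blog, and other crawlers are allowed everywhere.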

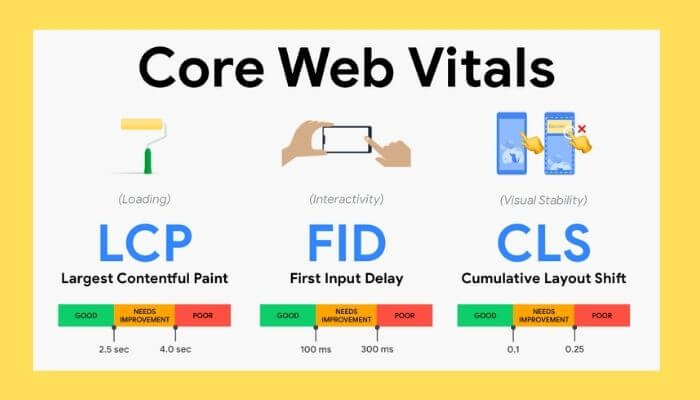

10. Core Web Vitals

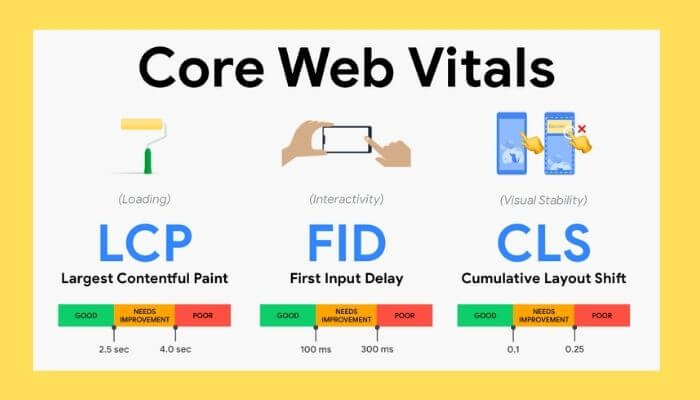

Core Web Vitals are Google's metrics aimed at improving website user experiences. They measure crucial aspects of web performance, emphasizing loading, interaction, and visual stability.

Sites that excel in these areas provide a smoother, faster, and more responsive user experience, which Google considers when ranking pages in search results.

Three Key Elements:

- Fast loading (LCP): Web pages should load their largest content element within 2.5 seconds.

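- Interaction with website (INP): Interaction to Next Paint replaced First Input Delay (FID) as the responsiveness metric in March 2024. After a user interacts (like clicking a button), the page should visibly respond within 200ms.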

- Stable visuals (CLS): Minimise content shifts on the screen, maintaining a CLS score under 0.1.
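These targets are easy to turn into an automated check. The sketch below rates measurements against Google's published "good" and "poor" boundaries; the sample numbers in `SAMPLE_PAGE` are invented, and real values would come from a tool such as PageSpeed Insights.

```python
# (good up to, poor above) for each metric, per Google's published thresholds.
# INP replaced FID as a Core Web Vital in March 2024; both are listed here.
THRESHOLDS = {
    "LCP": (2.5, 4.0),    # seconds
    "INP": (200, 500),    # milliseconds
    "FID": (100, 300),    # milliseconds
    "CLS": (0.1, 0.25),   # unitless layout-shift score
}

def rate_metric(name, value):
    """Return 'good', 'needs improvement', or 'poor' for one measurement."""
    good_max, poor_min = THRESHOLDS[name]
    if value <= good_max:
        return "good"
    if value <= poor_min:
        return "needs improvement"
    return "poor"

def rate_page(metrics):
    """Rate every metric in a {name: value} dict."""
    return {name: rate_metric(name, value) for name, value in metrics.items()}

def passes_core_web_vitals(metrics):
    """True only when every supplied metric is rated 'good'."""
    return all(rating == "good" for rating in rate_page(metrics).values())

# Invented measurements used only for illustration.
SAMPLE_PAGE = {"LCP": 3.1, "INP": 180, "CLS": 0.05}
ratings = rate_page(SAMPLE_PAGE)
```

In this sample the page responds quickly and stays visually stable, but its LCP of 3.1 seconds falls into "needs improvement", so loading speed is where to focus first.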

How to Improve Core Web Vitals:

- Analyse: Use tools like Google's PageSpeed Insights or Web Vitals Chrome Extension to gauge your site's performance.

- Optimise Content Delivery: Leverage content delivery networks (CDNs) and optimise images and scripts.

- Prioritise Loading: Load critical content first, then secondary content. Consider using lazy loading for non-essential media.

- Limit Dynamic Elements: Reduce or control ads, pop-ups, or other elements that can disrupt visual stability.

- Review and Iterate: Regularly check your metrics and make necessary adjustments for continuous improvement.

Embracing Technical SEO for Sustainable Success

Technical SEO is vital for optimal website performance and search visibility. By leveraging the discussed strategies, you can enhance organic reach and stay adaptable in the face of evolving algorithms.

Remember to regularly monitor and analyse your website's performance using tools like Google Analytics and Google Search Console.

Continuously adapt and optimise your technical SEO strategy to keep up with the ever-changing search engine algorithms and user expectations.

Partner with Cogify: Your SEO Success Awaits

Navigating the complex world of SEO can be daunting. Leave the technicalities to us and focus on what you do best.

If unlocking the full potential of your website is your goal, then Cogify is your solution. Reach out today and let's make SEO success your next big milestone.